Geometric Interpretation of the Lagrange Multiplier

Optimization is essential in engineering, economics, and sciences. Often, we need to optimize a function under a constraint. Lagrange multipliers provide an effective way to solve such constrained optimization problems.

What are Lagrange Multipliers?

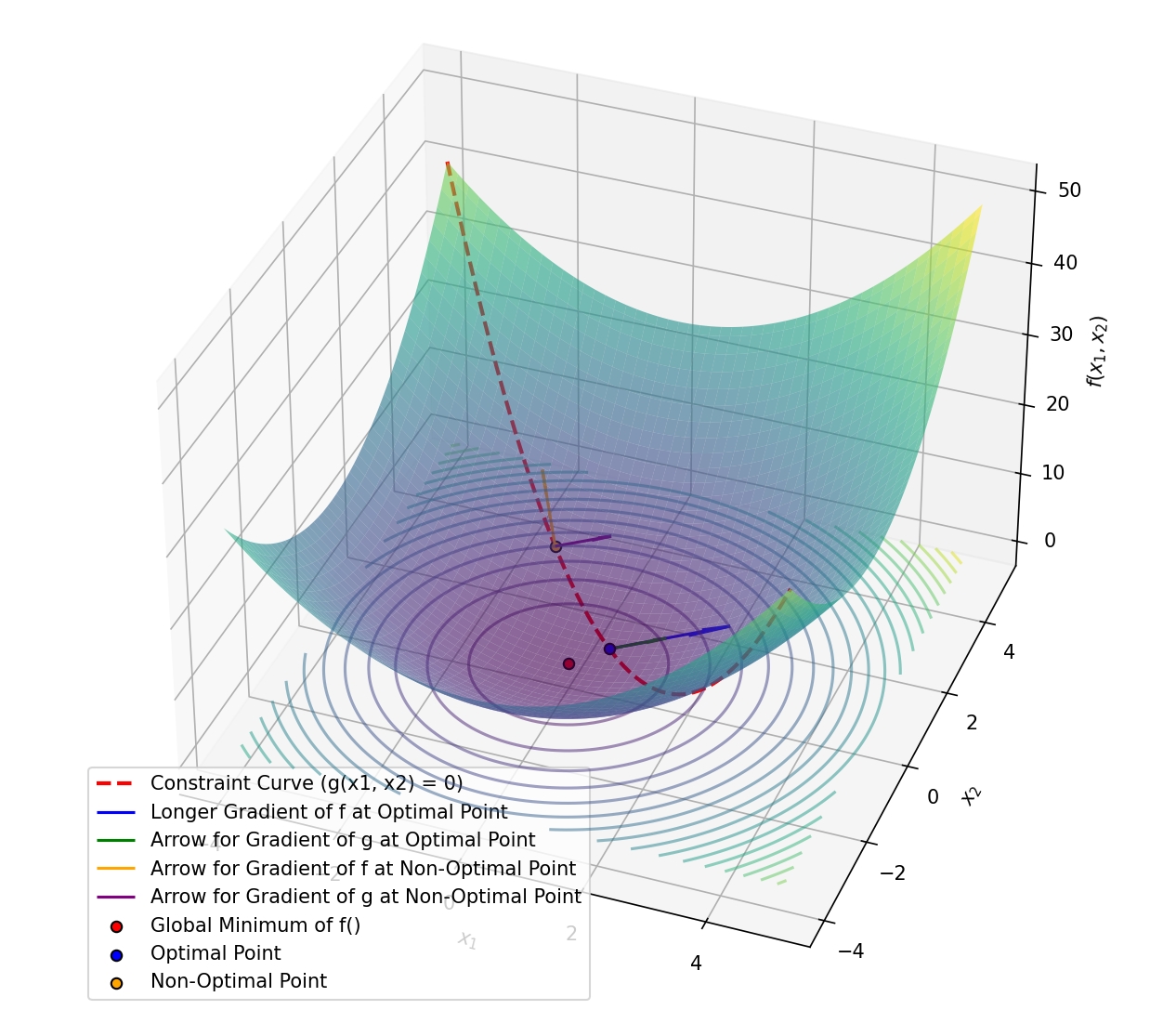

Suppose we want to minimize (or maximize) a function $f(x_1, x_2)$, but must satisfy a constraint $g(x_1, x_2) = 0$. The Lagrange multiplier method allows us to consider both the objective function and the constraint simultaneously.

The Geometric Idea

To minimize $f(x_1, x_2)$ subject to $g(x_1, x_2) = 0$, we need to ensure that, at the optimal point, the gradient of $f$ ($\nabla f$) is parallel to the gradient of $g$ ($\nabla g$). This means both gradients point in the same direction, indicating no further improvement is possible without violating the constraint. Mathematically:

\[\nabla f = \lambda \nabla g\]and transform it into the following system of equations:

\[\begin{cases} \frac{\partial f}{\partial x_1} + \lambda \frac{\partial g}{\partial x_1} = 0 \\ \frac{\partial f}{\partial x_2} + \lambda \frac{\partial g}{\partial x_2} = 0 \\ g(x_1, x_2) = 0 \end{cases}\]Here, $\lambda$ is the Lagrange multiplier, representing the proportional relationship between the gradients. At the optimal point, any movement along the constraint will change the value of $f$, and such a change will make the value no longer optimal, indicating that the current point is the best solution under the given condition.

Visualizing the Concept

-

Objective Function: The function $f(x_1, x_2) = x_1^2 + x_2^2$ represents a parabolic surface. The goal is to find the lowest point on this surface.

-

Constraint: The constraint $g(x_1, x_2) = x_1 + x_2 - 1 = 0$ is a line. The solution must lie along this line.

-

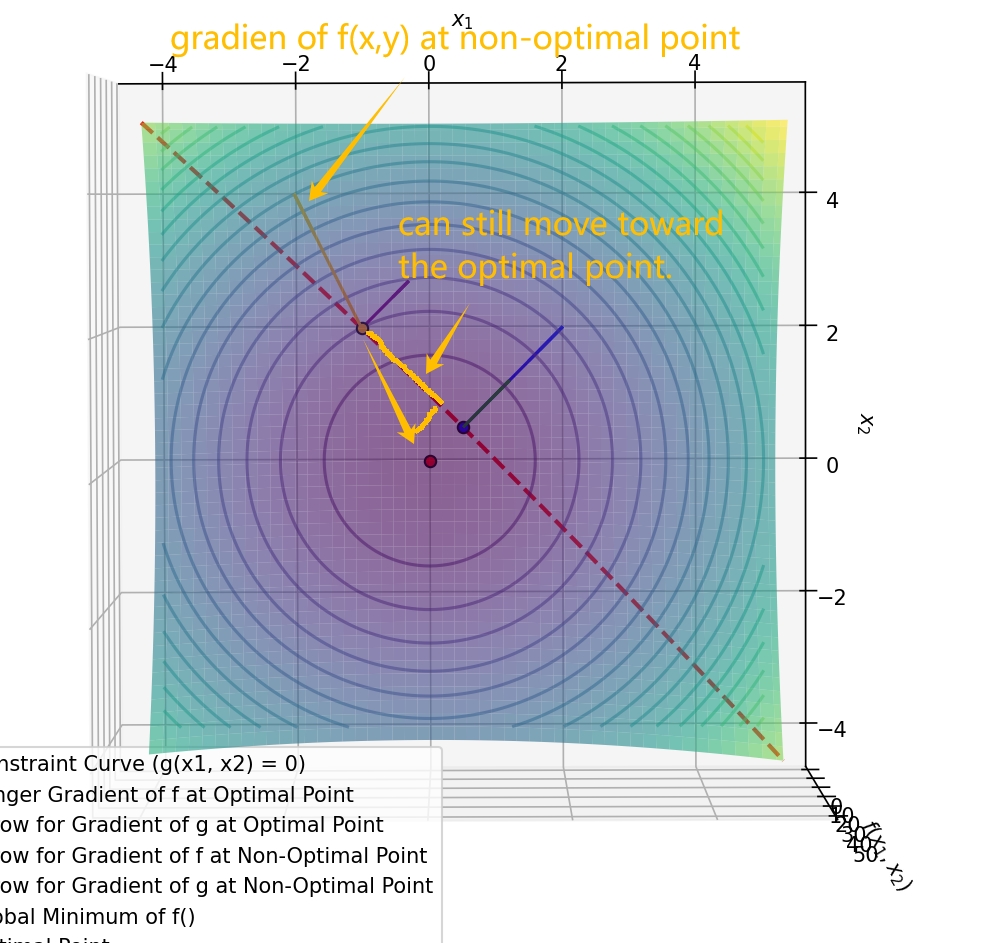

Gradient Alignment: At the optimal point, the gradient of $f$ is parallel to the gradient of $g$.

When the gradients are not aligned, we can decompose the gradient of $f$ into two components: one parallel to the constraint and one perpendicular to it. And if we move along the constraint, the value of $f$ will change,more specifically, it will decrease in this case. Like we mentioned in the previous post, the gradient direction is the steepest increase direction, so decomposeing the opposite direction will be the steepest decrease direction. Therefore, move along the constraint will decrease the value of $f$, indicating that the current point is not optimal.

Key Takeaways

- The Lagrange multiplier method finds the optimal solution by ensuring the alignment of the objective function’s gradient with the constraint.

- If the gradients are not aligned, we can decompose the gradient of the objective function into along the constraint direction and perpendicular to the constraint direction. Moving along the constraint will decrease (or increase) the value of $f$, indicating that the current point is not optimal.

☕ Happy learning journey~ 🛠️